Here is the question every technical team is asking right now: Is our AI system actually high-risk under the EU AI Act — or are we overcomplicating this? It is the right question to ask. Getting the classification wrong in either direction has serious consequences.

Under-classify a high-risk system, and you face fines up to €15 million or 3% of global annual turnover, plus potential market withdrawal. Over-classify a minimal-risk system, and you waste months of engineering and legal resources on obligations that simply don’t apply to you.

The good news is that the EU AI Act’s classification framework is structured and systematic. It is not a vague judgment call. However, it does require careful analysis — because the classification depends not just on what your AI does, but how it does it, who it affects, and what decisions it influences.

“Classification is the foundation of everything. Get it right, and your compliance program is efficient and targeted. Get it wrong, and every subsequent investment may be misdirected — or dangerously insufficient.”

— European AI Office Technical Classification Guidance, 2025

This guide is built for technical teams, product managers, legal counsel, and compliance officers who need to make definitive, defensible classification decisions. We cover every risk tier, walk through the eight Annex III sectors in detail, explain the GPAI classification rules, and provide a practical decision framework you can apply to your systems today.

This article is part of our EU AI Act Compliance Guide — the full pillar resource covering all compliance requirements, timelines, and enforcement details. If you need the broader context, start there. If you need to classify your AI system right now, you are in the right place.

Let’s work through this systematically.

How the EU AI Act Classification Logic Works

Before diving into individual tiers, it helps to understand the overall logic the Act uses. The EU AI Act does not classify AI systems by technology type, algorithm family, or data modality. Instead, it classifies by potential harm to people. This distinction matters enormously for technical teams who may instinctively reach for a technical definition.

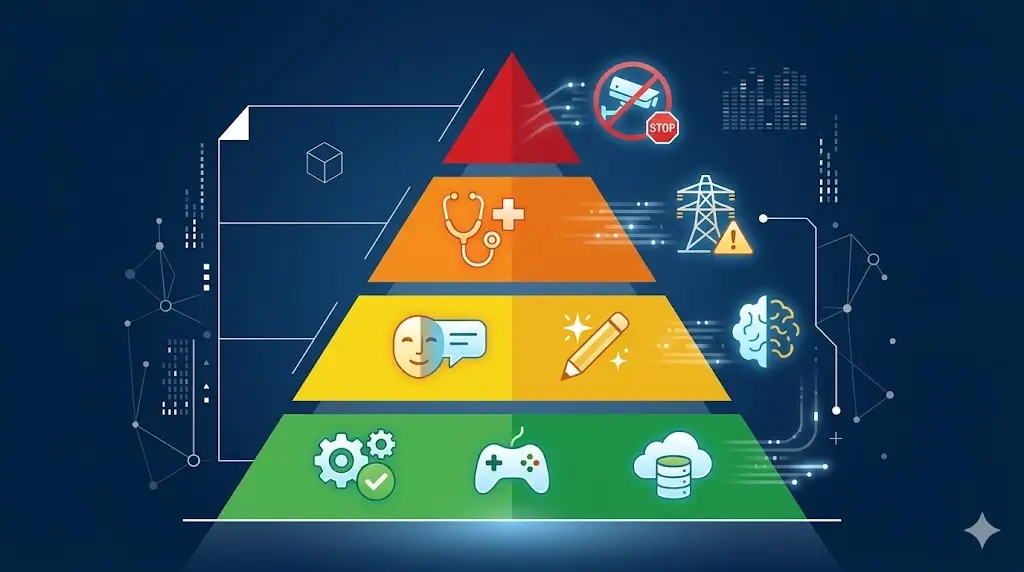

The Four Risk Tiers at a Glance

The EU AI Act establishes four risk tiers, each with distinct legal consequences. Understanding these tiers at a high level first makes every subsequent classification decision easier to navigate.

| Tier | Label | Legal Status | Core Obligation | Max Penalty |
|---|---|---|---|---|
| 1 | Unacceptable Risk | Prohibited (illegal to use) | Cease immediately | €35M / 7% turnover |
| 2 | High Risk | Permitted with strict requirements | 7-requirement compliance framework | €15M / 3% turnover |
| 3 | Limited Risk | Permitted with transparency rules | Disclose AI nature to users | €7.5M / 1.5% turnover |
| 4 | Minimal Risk | Permitted (no mandatory obligations) | Voluntary codes of conduct | No mandatory penalty |
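Each penalty ceiling in the table pairs a fixed amount with a share of turnover, so the exposure depends on company size. The sketch below shows that arithmetic. It assumes the higher of the two ceilings applies, which is how the Act is generally read for larger undertakings; smaller companies may be capped at the lower figure. The tier keys and the `max_fine_eur` name are illustrative, not taken from the Act.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PenaltyCap:
    fixed_eur: int      # fixed ceiling in euros
    turnover_bps: int   # turnover ceiling in basis points (300 = 3%)


# Ceilings copied from the tier table; the dictionary keys are illustrative.
CAPS = {
    "unacceptable": PenaltyCap(35_000_000, 700),
    "high": PenaltyCap(15_000_000, 300),
    "limited": PenaltyCap(7_500_000, 150),
}


def max_fine_eur(tier: str, global_turnover_eur: int) -> float:
    """Return the larger of the fixed ceiling and the turnover-based ceiling.

    Assumption: "whichever is higher" applies. Check the rule for your
    company size before relying on this figure.
    """
    cap = CAPS[tier]
    turnover_ceiling = global_turnover_eur * cap.turnover_bps / 10_000
    return max(cap.fixed_eur, turnover_ceiling)


# Hypothetical company with EUR 2 billion in global annual turnover.
exposure = max_fine_eur("high", 2_000_000_000)
print(f"High-risk exposure ceiling: EUR {exposure:,.0f}")
```

For a company with small turnover the fixed amount dominates; for a large one the percentage does. Run the numbers for your own turnover instead of quoting the headline figure.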

Additionally, the Act introduces a fifth, cross-cutting category for General Purpose AI (GPAI) models. GPAI classification sits alongside the four tiers, not inside them. A GPAI model can also be deployed in ways that trigger high-risk classification. We address this in the GPAI Classification section below.

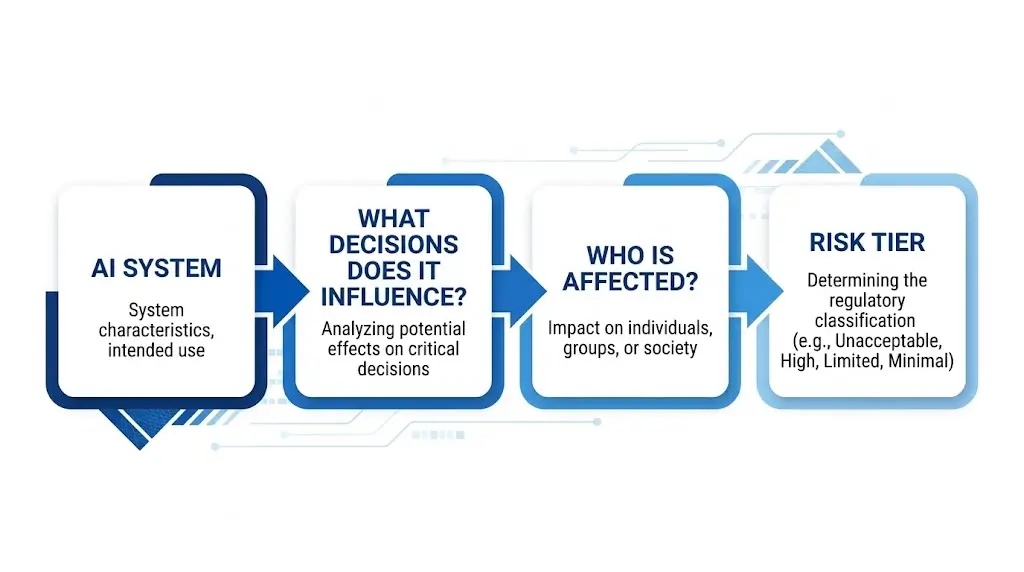

The Two Questions That Drive Every Classification

At its core, every classification decision under the EU AI Act comes down to two fundamental questions. First: What sector does this AI operate in? Second: What decisions does this AI influence, and how consequential are those decisions for the people involved?

Sector alone is not determinative. Equally, the nature of the decision alone is not determinative. Both factors must be present for high-risk classification under Annex III: the AI system must operate within a listed Annex III sector and make or meaningfully influence consequential decisions about individuals.

For example, consider two AI systems deployed in a hospital. An AI that schedules operating rooms operates in the healthcare sector. However, it does not make decisions about patient diagnosis or treatment. Consequently, it is likely minimal-risk. By contrast, an AI that recommends diagnostic paths based on patient symptoms operates in the same sector — but now influences consequential clinical decisions about individual patients. Therefore, it is high-risk.

Why Function Matters More Than Form

Technical teams sometimes misclassify AI systems by focusing on what the technology is rather than what it does. The EU AI Act does not care whether your system uses a transformer architecture, a gradient-boosted classifier, or a rule-based decision tree. It cares about the system’s intended purpose and its real-world effect on individuals.

Therefore, a simple logistic regression model used to make credit decisions is high-risk. Conversely, a sophisticated deep learning model used to optimize warehouse pick routes is minimal-risk. The complexity of the technology is irrelevant. The impact on people’s rights and opportunities is everything.

Furthermore, the Act classifies based on intended use — but also considers reasonably foreseeable use. If you build a general-purpose AI tool and it is reasonably foreseeable that deployers will use it in a high-risk context, that foreseeability is part of your classification analysis as a provider.

Tier 1: Prohibited AI — The Complete Banned List

Before classifying into the other tiers, every organization must first check whether any of their AI systems fall into the prohibited category. These practices have been illegal since February 2, 2025. If you identify any, you need immediate legal intervention — not a compliance roadmap.

All Six Prohibited Practices Explained

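The EU AI Act bans specific categories of AI practice outright. This guide covers six of them; Article 5 of the Act also prohibits untargeted scraping of facial images to build recognition databases and emotion recognition in workplaces and educational institutions, so check any system against the full article text. Here is what each of the six means in technical and operational terms.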

1. Subliminal manipulation systems. AI that influences human behavior through techniques operating below the threshold of conscious perception — exploiting subconscious biases, fears, or desires to cause behavior the person would not choose if fully aware. This includes AI-driven dark patterns engineered to exploit cognitive vulnerabilities at scale, not simply persuasive interfaces.

2. Exploitation of vulnerabilities. AI that deliberately targets individuals based on known vulnerabilities (including age, disability, social disadvantage, or mental health conditions) to manipulate their decisions in ways that harm their interests. For example, AI systems that use profiling to target elderly users with financially harmful nudges fall here.

3. Social scoring by public authorities. AI that enables government or public bodies to evaluate citizens based on their behavior, social interactions, or personal characteristics and then restrict their access to services, opportunities, or freedoms based on that score. This is the “Chinese social credit system” prohibition, extended to any EU public authority deploying similar systems.

4. Real-time remote biometric identification in public spaces. AI that identifies individuals in real time through biometric data — primarily facial recognition — in publicly accessible spaces. The key qualifier is “real-time.” Furthermore, there are narrow law enforcement exceptions, but only with prior judicial authorization and for specific serious crimes.

5. Predictive policing based on profiling. AI that assesses the likelihood of an individual committing a crime based solely on personal characteristics, social circumstances, or behavioral profiling — without specific evidence of a planned or committed crime. Risk assessment tools based on demographic profiles fall into this category.

6. Unauthorized biometric categorization. AI that infers sensitive attributes — race, political opinion, religious beliefs, sexual orientation, trade union membership — from biometric data, unless specifically authorized for narrow law enforcement purposes under strict conditions.

Edge Cases and Common Misunderstandings

Several legitimate AI use cases sit close to these prohibitions without crossing them. Understanding the boundaries prevents both over-restriction and under-restriction.

For instance, post-hoc biometric identification in law enforcement — reviewing existing footage after a crime — is not prohibited by default. The prohibition targets real-time identification. However, even post-hoc identification requires careful legal authorization analysis under national law and the Act’s narrow exemptions.

Similarly, fraud detection AI that uses behavioral signals is not the same as prohibited social scoring. Fraud detection is transactional and specific, not a general evaluation of a person’s social worth. Nevertheless, if your fraud AI begins generating persistent “risk profiles” that affect multiple future decisions unrelated to the original transaction, you approach prohibited territory.

Additionally, personalization algorithms that adjust content or offers based on user preferences are not subliminal manipulation unless they specifically exploit psychological vulnerabilities to cause harm. Standard marketing personalization sits outside the prohibition — but AI engineered to exploit addiction-like psychological patterns may not.

⚠ Legal Alert

If any system in your AI inventory appears to match a prohibited practice, do not attempt to classify it as lower-risk or restructure it without qualified legal counsel. The prohibition applies regardless of intent, business purpose, or technical framing. Seek legal advice before making any product or system changes.

Tier 2: How to Determine If Your AI Is High-Risk

High-risk classification is where the most important — and most contested — classification decisions happen. This is the tier affecting the most businesses, carrying the most compliance obligations, and subject to the August 2026 enforcement deadline. Getting this right matters enormously.

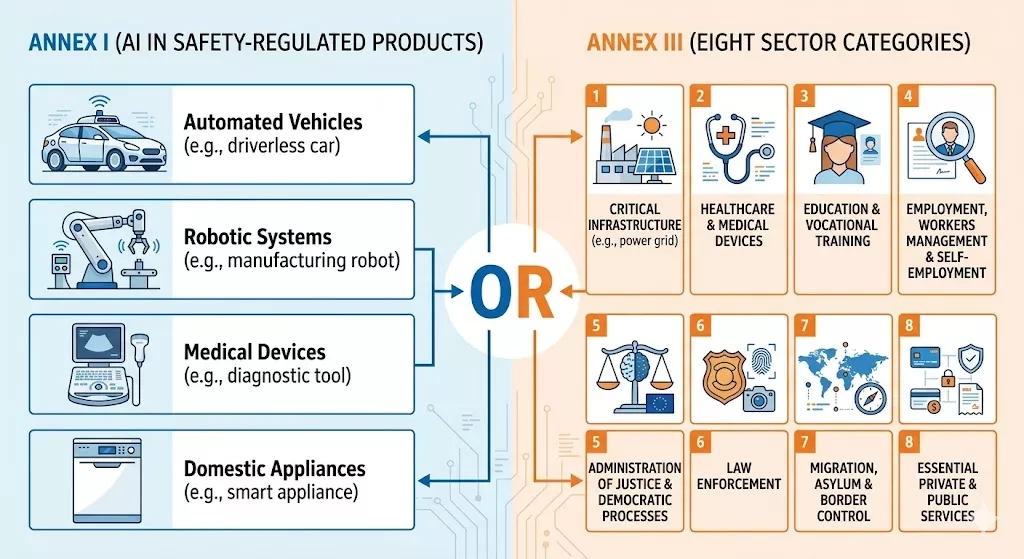

There are two separate pathways to high-risk classification. Your AI system is high-risk if it meets the criteria of either Annex I or Annex III. Furthermore, a system can qualify under both annexes simultaneously.

Annex I: AI in Safety-Regulated Products

Annex I covers AI systems that are either a safety component of, or themselves constitute, products already regulated under EU product safety legislation. If your AI is embedded in any of the following product categories, it qualifies as high-risk under Annex I — regardless of what specific function it performs:

- Machinery (Machinery Regulation)

- Medical devices and in vitro diagnostic medical devices (MDR / IVDR)

- Lifts and their safety components

- Equipment and protective systems for use in potentially explosive atmospheres

- Radio equipment (Radio Equipment Directive)

- Pressure equipment

- Recreational craft and personal watercraft

- Cableway installations

- Agricultural and forestry tractors

- Civil aviation safety systems (EASA-regulated)

- Two- and three-wheel vehicles and quadricycles

- Motor vehicles (type approval)

- Railway systems (interoperability and safety)

Importantly, Annex I systems face the 2027 deadline for systems already on the market before August 2026. However, new Annex I systems placed on the market after August 2026 must comply immediately. Moreover, the regulatory alignment with existing EU safety legislation means many conformity assessment procedures can be integrated with existing CE marking processes.

Annex III: The Eight High-Risk Sectors (Deep Dive)

Annex III is the classification pathway that affects the broadest range of businesses. It covers eight sectors where AI can significantly impact people’s fundamental rights, employment, or access to essential services. For each sector, we explain exactly what qualifies — and what does not.

Sector 1: Biometric identification and categorization. This covers AI that identifies individuals based on biometric data — facial features, fingerprints, iris patterns, gait, voice — or that categorizes individuals into groups based on protected characteristics inferred from biometrics. The key qualifier: the system must involve real individuals, not anonymized datasets used for research.

Sector 2: Critical infrastructure management. AI that manages or controls critical infrastructure — electricity grids, water supply systems, gas networks, transportation networks, digital infrastructure, and financial markets — falls here. Specifically, the high-risk classification applies when the AI makes or influences operational decisions about the infrastructure itself, not merely provides analytics.

Sector 3: Education and vocational training. AI that determines access to educational institutions, allocates students to programs, evaluates learning outcomes, monitors student engagement or behavior, or makes decisions about student progression qualifies as high-risk. Adaptive learning tools that personalize content without making access or progression decisions typically fall outside this tier.

Sector 4: Employment and workforce management. This is the sector attracting the most immediate regulatory attention. High-risk AI includes CV screening and ranking tools, interview analysis systems, workforce monitoring and productivity scoring, promotion and succession planning AI, and termination risk scoring tools. The qualifying criterion is that the AI influences decisions about employment, including access to employment and working conditions.

Sector 5: Access to essential private and public services. Credit scoring AI, loan application processing, insurance underwriting tools, social benefit eligibility systems, and emergency services dispatch AI all fall here. The unifying theme is that the AI influences whether individuals can access services they need. Consequently, pricing optimization tools that affect insurance premiums for individual customers also qualify.

Sector 6: Law enforcement. AI used in policing and criminal justice — risk assessment tools for recidivism, crime hotspot prediction, evidence analysis, polygraph-like behavioral analysis, and witness or suspect profiling — falls into this sector. Additionally, lie detection or emotional state assessment in investigative contexts qualifies regardless of the underlying technology.

Sector 7: Migration, asylum, and border management. AI systems used in border control processes — risk scoring for travelers, visa application assessment, asylum claim processing, and border monitoring — are high-risk. Furthermore, AI that assists in verifying documents at borders or assessing individual risk profiles for immigration purposes qualifies.

Sector 8: Administration of justice and democratic processes. AI that assists courts in legal research, sentencing recommendations, or case outcome prediction is high-risk. Similarly, AI used in election management, voter registration, or political campaign targeting that could influence democratic processes falls into this sector.

The Decision Impact Test: When Sector Alone Is Not Enough

Operating in one of the eight Annex III sectors is a necessary but not always sufficient condition for high-risk classification. In 2023, EU legislators amended the draft Act to add an important qualifier: the AI system must make or significantly influence decisions that have a meaningful impact on individuals’ fundamental rights, safety, or access to opportunities.

This “decision impact test” means you need to ask a second question for every AI system in an Annex III sector: Does this system make or meaningfully influence individual-level decisions with real consequences?

For example, an AI analytics dashboard in a hospital that provides aggregate statistics about patient outcomes to hospital management does not make individual patient decisions. Therefore, despite operating in the healthcare sector, it likely falls outside high-risk classification. However, an AI that generates individualized clinical decision support recommendations that clinicians consult before treatment decisions does influence individual-level outcomes — and is therefore high-risk.

Classification Insight

The European AI Office has clarified that “significantly influences” means the AI output plays a substantive role in the decision-making process — not merely provides background information among many other sources. If a human decision-maker regularly relies on the AI’s output as a primary input, the AI system significantly influences the decision, even if a human makes the final call.
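The two conditions described above (listed sector plus decision impact) can be written down as a small predicate, which helps teams apply the test the same way across an inventory. This is a minimal sketch under stated assumptions: the `SystemProfile` fields, the `OutputRole` values, and the function names are hypothetical, and each boolean still has to be answered by a person who understands the deployment.

```python
from dataclasses import dataclass
from enum import Enum


class OutputRole(Enum):
    BACKGROUND = "background"        # one minor source among many
    PRIMARY_INPUT = "primary_input"  # decision-makers regularly rely on it
    AUTOMATED = "automated"          # the system makes the decision itself


@dataclass(frozen=True)
class SystemProfile:
    in_annex_iii_sector: bool  # operates in one of the eight listed sectors
    individual_level: bool     # output concerns specific individuals
    consequential: bool        # affects rights, safety, or access to opportunities
    output_role: OutputRole


def passes_decision_impact_test(profile: SystemProfile) -> bool:
    """True when the output substantively shapes a consequential individual decision."""
    influences = profile.output_role in (OutputRole.PRIMARY_INPUT, OutputRole.AUTOMATED)
    return profile.individual_level and profile.consequential and influences


def is_annex_iii_high_risk(profile: SystemProfile) -> bool:
    """Sector membership and decision impact must both hold."""
    return profile.in_annex_iii_sector and passes_decision_impact_test(profile)


# A CV ranking tool that HR reviewers rely on as a primary input.
cv_screening = SystemProfile(True, True, True, OutputRole.PRIMARY_INPUT)
# An aggregate statistics dashboard in the same sector.
aggregate_dashboard = SystemProfile(True, False, False, OutputRole.BACKGROUND)

print(is_annex_iii_high_risk(cv_screening), is_annex_iii_high_risk(aggregate_dashboard))
```

Modeling the role of the output as an enum rather than a single "human in the loop" flag reflects the point made above: a human making the final call does not remove the system from scope if that human relies on the output as a primary input.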

Real-World Classification Examples: High-Risk vs. Not High-Risk

Abstract principles only get you so far. Here are concrete classification examples across common AI deployment scenarios, showing how the decision impact test applies in practice.

| AI System | Sector | Decision Impact? | Classification |
|---|---|---|---|
| CV screening tool that ranks candidates for HR review | Employment (Annex III.4) | Yes: influences access to job opportunities | High-Risk |
| Employee scheduling optimization tool | Employment (adjacent) | No individual-level access/rights decisions | Minimal-Risk |
| Credit scoring model for loan applications | Essential services (Annex III.5) | Yes: determines access to financial services | High-Risk |
| Fraud transaction detection model | Financial (adjacent) | Transactional flag; human review required for account action | Limited/Minimal |
| AI diagnostic imaging reader (radiology) | Medical device (Annex I) | Yes: influences clinical diagnosis | High-Risk |
| Hospital bed allocation optimization AI | Healthcare (adjacent) | Operational, not individual clinical decisions | Minimal-Risk |
| AI proctoring system monitoring exam integrity | Education (Annex III.3) | Yes: influences assessment outcomes for students | High-Risk |
| Personalized learning content recommendation | Education (adjacent) | No access/progression decisions made | Minimal-Risk |
| Insurance underwriting AI for individual policies | Essential services (Annex III.5) | Yes: influences access to and pricing of insurance | High-Risk |
| AI chatbot for customer service in a bank | Financial (adjacent) | No individual credit or access decisions | Limited-Risk |

Tier 3 and Tier 4: Limited Risk and Minimal Risk

Once you have confirmed your AI system is not prohibited and does not qualify as high-risk, the remaining question is whether it falls into the limited-risk or minimal-risk tier. The distinction between these two tiers determines whether you have any mandatory obligations at all.

What Limited-Risk AI Must Do

Limited-risk AI systems face transparency obligations only. These obligations are narrow in scope but must be implemented deliberately. The core principle is that users must know when they are interacting with an AI system or when AI is assessing them.

Three specific categories of AI fall into the limited-risk tier by default. First, conversational AI and chatbots — any system that interacts with humans through natural language must disclose its AI nature at the start of the interaction, unless the context makes this obvious. A clearly branded AI assistant on a website may satisfy this implicitly, but a chatbot pretending to be a human customer service agent does not.

Second, AI-generated synthetic content: text, images, audio, and video that appear authentic but are AI-generated must be labeled as machine-generated. This applies directly to deepfake video and audio, AI-generated news articles, and synthetic media used in advertising. It also applies to AI-generated images used commercially that are not already clearly presented as AI-created artwork.

Third, emotion recognition and biometric categorization systems — if your AI system assesses an individual’s emotional state, personality, or behavioral patterns, you must inform the affected individual before or at the time of assessment. Marketing AI that infers consumer emotional states from facial micro-expressions during video advertising falls here.

Importantly, limited-risk transparency obligations are not trivial to implement well. You need user interface design decisions, clear disclosure language, and in some cases legal review to ensure disclosures are meaningful rather than buried in terms of service.
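For the chatbot case, the disclosure rule described above translates into a simple engineering constraint: the first message a user sees must carry the disclosure unless the context already makes the AI nature obvious. The sketch below shows one way to enforce that at the session level. The `ChatSession` class and the disclosure wording are hypothetical; the actual text should come from your legal review.

```python
AI_DISCLOSURE = "You are chatting with an AI assistant, not a human agent."


class ChatSession:
    """Wraps model replies so the first one always carries the AI disclosure."""

    def __init__(self, obvious_from_context: bool = False) -> None:
        # Assumption: a clearly branded AI assistant may count as already disclosed.
        self._disclosed = obvious_from_context

    def reply(self, model_output: str) -> str:
        if self._disclosed:
            return model_output
        self._disclosed = True
        return f"{AI_DISCLOSURE}\n\n{model_output}"


session = ChatSession()
print(session.reply("Hi, how can I help with your account today?"))
print(session.reply("Your card was dispatched yesterday."))
```

Putting the check in the session wrapper rather than in the prompt means the disclosure cannot be dropped by a prompt change or a model update.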

Minimal Risk: The Majority of Commercial AI

Minimal-risk AI faces no mandatory compliance obligations under the EU AI Act. However, the European Commission encourages voluntary adherence to codes of conduct and industry best practices. Consequently, many organizations building minimal-risk AI still choose to implement internal governance frameworks — both for ethical reasons and as preparation for potential future regulatory changes.

Examples of minimal-risk AI are broad and varied. Spam and malware filters, recommendation engines for entertainment and e-commerce, AI-powered search ranking (in non-employment, non-credit contexts), productivity AI tools, inventory forecasting, predictive maintenance, and the vast majority of enterprise data analytics tools all fall here.

Furthermore, most internal business intelligence AI — sales forecasting, demand planning, churn prediction for business accounts — sits in the minimal-risk tier, provided it does not make or influence decisions about individual people’s rights, access, or fundamental interests.

GPAI Classification: Rules for Foundation Models and LLMs

General Purpose AI (GPAI) classification operates differently from the four risk tiers. Rather than replacing tier classification, it adds a layer of obligations on top of whatever tier your AI system occupies. Understanding this parallel classification track is essential for any organization building with or deploying foundation models.

What Qualifies as a GPAI Model?

The EU AI Act defines a GPAI model as an AI model that has been trained on large amounts of data at scale, exhibits significant generality, and can perform a wide range of distinct tasks. In practice, this covers large language models (LLMs), multimodal models that process text and images, code generation models, and other foundation models.

Specifically, the classification applies to the underlying model, not the application built on top of it. Therefore, if you fine-tune or deploy an open-source LLM for a specific use case, you are a deployer of a GPAI model. You are not the GPAI provider unless you trained the underlying model or your changes substantially modified its weights.

This distinction has important compliance implications. GPAI providers carry the primary obligations for the base model. Deployers who build applications on top of GPAI models carry their own obligations — including high-risk obligations if their specific use case qualifies — but they rely on the GPAI provider for base-level model documentation and copyright compliance.

Systemic Risk Threshold: The 10²⁵ FLOPs Rule

Not all GPAI models face the same obligations. The EU AI Act distinguishes between standard GPAI models and those deemed to pose systemic risk. The threshold for systemic risk is training compute exceeding 10²⁵ floating point operations (FLOPs).

For context, this threshold currently covers the largest frontier models — GPT-4 class systems, Gemini Ultra-class systems, and similar large-scale foundation models. Most fine-tuned or smaller open-source models fall below this threshold. Additionally, the European AI Office has the authority to designate specific models as systemic-risk based on capability evaluations, even if they don’t technically exceed the compute threshold.

| GPAI Category | Threshold | Key Obligations |
|---|---|---|
| Standard GPAI | Below 10²⁵ FLOPs | Technical documentation, EU copyright law compliance for training data, transparency to downstream deployers |
| Systemic Risk GPAI | Above 10²⁵ FLOPs (or designated by EU AI Office) | All standard obligations + adversarial testing (red-teaming), incident reporting to EU AI Office, cybersecurity measures, energy consumption reporting |
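If you do not have a measured training-compute figure, a rough estimate is often enough to see which side of the threshold a model falls on. The sketch below uses the common rule of thumb of about 6 FLOPs per parameter per training token for dense transformer models. That heuristic is an engineering approximation and an assumption here, not a method defined by the Act, and the parameter and token counts are made-up illustrations rather than figures for any real model.

```python
SYSTEMIC_RISK_FLOPS = 1e25  # threshold stated in the Act


def estimate_training_flops(n_params: float, n_tokens: float) -> float:
    """Rough estimate: about 6 FLOPs per parameter per training token.

    Assumption: dense transformer training; other architectures differ.
    """
    return 6.0 * n_params * n_tokens


def gpai_category(training_flops: float, designated: bool = False) -> str:
    """Classify by compute, or by explicit designation regardless of compute."""
    if designated or training_flops > SYSTEMIC_RISK_FLOPS:
        return "systemic-risk GPAI"
    return "standard GPAI"


# Hypothetical 7-billion-parameter model trained on 2 trillion tokens.
small = estimate_training_flops(7e9, 2e12)
# Hypothetical 1-trillion-parameter model trained on 15 trillion tokens.
large = estimate_training_flops(1e12, 1.5e13)

print(f"{small:.1e} FLOPs -> {gpai_category(small)}")
print(f"{large:.1e} FLOPs -> {gpai_category(large)}")
```

Treat an estimate within roughly an order of magnitude of the threshold as inconclusive and obtain the actual compute figure from training logs or the model provider.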

GPAI and High-Risk: How They Interact

The most common source of confusion in GPAI classification is how GPAI status interacts with high-risk tier classification. The answer is that they operate simultaneously and additively.

Consider a company that fine-tunes an open-source LLM for use in a CV screening application. First, the underlying model may be a GPAI — but the company is a deployer, not the GPAI provider, so standard GPAI obligations fall primarily on the original model developer. Second, however, the CV screening application itself qualifies as a high-risk AI system under Annex III.4 (employment). Therefore, the deploying company must meet all high-risk AI obligations for the application layer.

Consequently, companies building specialized applications on top of GPAI models must independently analyze whether their application-level use case triggers high-risk classification — regardless of the GPAI status of the underlying model.

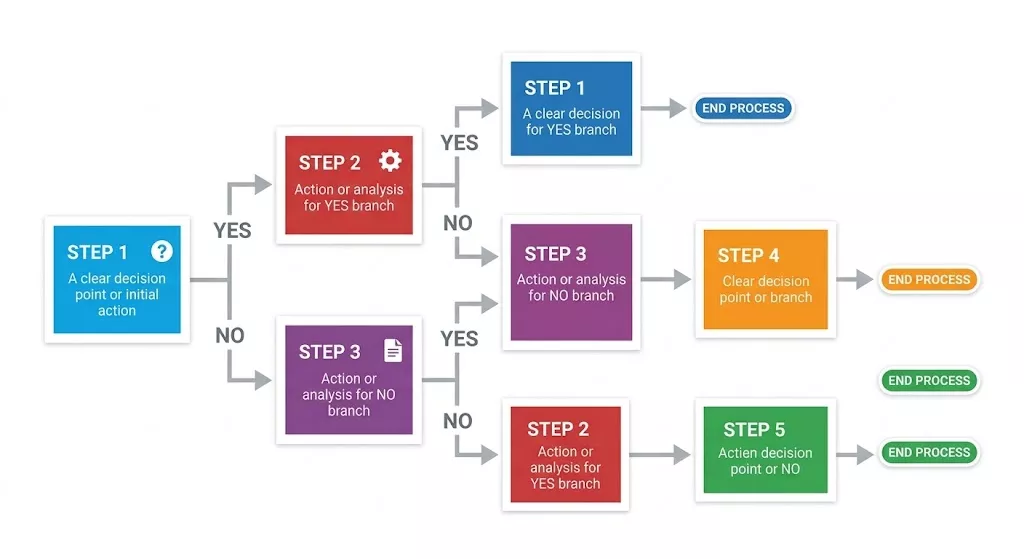

Step-by-Step Classification Decision Framework

Use the following sequential framework to classify any AI system in your inventory. Work through each step in order and stop at the first step that yields a definitive tier classification. The one exception is the GPAI check in Step 3: it records a parallel status rather than a tier, so you always continue to Step 4 after it.

Step 1: Scope Check — Does the EU AI Act Apply at All?

Before classifying risk tier, confirm the system is actually within the Act’s scope. Ask the following questions:

- Is this system an “AI system” as defined by the Act? (Any machine-based system that processes inputs to generate outputs like predictions, recommendations, decisions, or content that influences real or virtual environments.)

- Does the system affect individuals in EU member states, either directly or indirectly?

- Is it used for commercial or professional purposes, not purely personal or scientific research purposes?

If all three answers are yes, the Act applies and you proceed to Step 2. If any answer is no, the Act may not apply — but document your reasoning carefully, since scope determinations are auditable.

Step 2: Prohibited AI Check

Next, check whether the system matches any of the six prohibited practices. Ask: does this system use subliminal manipulation techniques? Does it exploit psychological vulnerabilities to cause harm? Does it enable social scoring by a public authority? Does it perform real-time biometric identification in public? Does it make predictions about criminal behavior based purely on profiling? Does it infer protected characteristics from biometric data without lawful authorization?

If the answer to any question is yes, the system is prohibited. Do not proceed further. Seek qualified legal counsel immediately and cease operation or development of the system pending that advice.

Step 3: GPAI Check

Determine whether the system is or incorporates a General Purpose AI model. Ask: was this model trained on large-scale, broadly applicable data to perform a wide range of tasks? If yes, record GPAI status and determine whether training compute exceeded 10²⁵ FLOPs (the systemic risk threshold). Then continue to Step 4: GPAI status runs in parallel with tier classification, not instead of it.

Step 4: High-Risk Check (Annex I and III)

This step has two parts. First, for Annex I: is this AI a safety component of, or does it constitute, a product regulated by EU safety legislation (machinery, medical devices, aviation, automotive, etc.)? If yes, the system is high-risk under Annex I.

Second, for Annex III: does this AI operate within any of the eight Annex III sectors? If yes, apply the Decision Impact Test: does the AI make or meaningfully influence individual-level decisions with real consequences for people’s rights, opportunities, or access to essential services? If both conditions apply, the system is high-risk under Annex III.

Step 5: Limited-Risk or Minimal-Risk Check

If the system is not prohibited and not high-risk, determine whether it falls into limited-risk. Ask: is this system a chatbot or conversational AI that users might not immediately recognize as AI? Does it generate synthetic content that resembles authentic human-generated content? Does it assess individuals’ emotional states or infer personal characteristics?

If any answer is yes, the system is limited-risk and requires transparency obligations. If all answers are no, the system is minimal-risk with no mandatory obligations under the Act.
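The five steps above can be sketched as one function that takes the answers to each step's questions and returns a tier plus a parallel GPAI status. This is an illustrative outline, not a legal determination tool: the `Answers` fields, the `Tier` names, and `classify` are all hypothetical, and every boolean input stands for a judgment your team has to make and document.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Tier(Enum):
    OUT_OF_SCOPE = "out of scope"
    PROHIBITED = "prohibited"
    HIGH = "high-risk"
    LIMITED = "limited-risk"
    MINIMAL = "minimal-risk"


@dataclass(frozen=True)
class Answers:
    in_scope: bool              # Step 1: all three scope questions answered yes
    prohibited: bool            # Step 2: matches any prohibited practice
    is_gpai: bool               # Step 3: is or incorporates a GPAI model
    annex_i_product: bool       # Step 4: safety component of a regulated product
    annex_iii_sector: bool      # Step 4: operates in a listed sector
    decision_impact: bool       # Step 4: passes the Decision Impact Test
    transparency_trigger: bool  # Step 5: chatbot, synthetic content, emotion assessment
    training_flops: float = 0.0


@dataclass(frozen=True)
class Result:
    tier: Tier
    gpai: Optional[str]  # None, "standard", or "systemic-risk"


def classify(a: Answers) -> Result:
    if not a.in_scope:
        return Result(Tier.OUT_OF_SCOPE, None)
    if a.prohibited:
        return Result(Tier.PROHIBITED, None)
    # GPAI status is recorded alongside the tier and never ends the process.
    gpai = None
    if a.is_gpai:
        gpai = "systemic-risk" if a.training_flops > 1e25 else "standard"
    if a.annex_i_product or (a.annex_iii_sector and a.decision_impact):
        return Result(Tier.HIGH, gpai)
    if a.transparency_trigger:
        return Result(Tier.LIMITED, gpai)
    return Result(Tier.MINIMAL, gpai)


# CV screening application built on a fine-tuned open-source LLM.
cv_tool = Answers(True, False, True, False, True, True, False, training_flops=8e22)
print(classify(cv_tool))
```

The order of the checks mirrors the order of the steps, so an early return corresponds to stopping at the first definitive answer.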

Documenting Your Classification Decision

Critically, your classification decision must be documented — regardless of outcome. Regulators can ask you to demonstrate the reasoning behind your classification. Therefore, for each AI system in your inventory, create a classification record that includes the system name and description, the intended use and deployment context, the classification tier reached, the specific Annex III sectors checked and why each was accepted or rejected, the Decision Impact Test analysis for any Annex III systems, and the names of the people who made the classification decision and when.

Additionally, set a review trigger. Your classification must be re-evaluated any time the system’s intended purpose changes, it is deployed in a new context, a significant model update is made, or new guidance from the European AI Office is issued on relevant sectors.

✓ Classification Documentation Checklist

- AI system name, version, and brief technical description

- Intended purpose and primary use cases documented

- All EU member states where the system is deployed or accessible

- Prohibited AI check completed with written outcome

- GPAI status assessed, compute estimate recorded if applicable

- Each Annex III sector checked individually with accept/reject reasoning

- Decision Impact Test analysis completed for any Annex III sector hits

- Final classification tier recorded with supporting rationale

- Classification date and names of responsible team members

- Next scheduled review date established (recommend: quarterly or on material change)
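The checklist above maps naturally onto a structured record that can be stored next to the system's other documentation and checked for gaps automatically. The sketch below is one possible shape, assuming a JSON export is acceptable for your audit trail. The field names are hypothetical and the example values are invented.

```python
import json
from dataclasses import asdict, dataclass
from datetime import date


@dataclass
class ClassificationRecord:
    system_name: str
    version: str
    description: str
    intended_purpose: str
    member_states: list[str]
    prohibited_check_outcome: str
    gpai_status: str
    annex_iii_sector_notes: dict[str, str]  # sector -> accept/reject reasoning
    decision_impact_analysis: str
    final_tier: str
    rationale: str
    classified_on: date
    classified_by: list[str]
    next_review: date


def incomplete_fields(record: ClassificationRecord) -> list[str]:
    """Names of fields that are still empty and would leave a gap in an audit."""
    return [name for name, value in asdict(record).items() if value in ("", [], {}, None)]


def to_json(record: ClassificationRecord) -> str:
    # default=str turns the date fields into ISO strings such as "2026-01-15".
    return json.dumps(asdict(record), default=str, indent=2)


record = ClassificationRecord(
    system_name="Candidate Ranker",
    version="2.3",
    description="Gradient-boosted model ranking applicants for recruiter review",
    intended_purpose="Shortlisting for open roles",
    member_states=["DE", "FR"],
    prohibited_check_outcome="No prohibited practice identified",
    gpai_status="Not a GPAI model",
    annex_iii_sector_notes={"Employment": "Accepted: influences access to employment"},
    decision_impact_analysis="",  # left empty on purpose to show the gap check
    final_tier="high-risk",
    rationale="Annex III employment sector and primary input to hiring decisions",
    classified_on=date(2026, 1, 15),
    classified_by=["Compliance lead", "ML lead"],
    next_review=date(2026, 4, 15),
)
print(incomplete_fields(record))
```

Running the gap check in a release pipeline is one way to make sure a record cannot be marked final while any checklist item is still blank.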

Borderline Cases and How to Handle Them

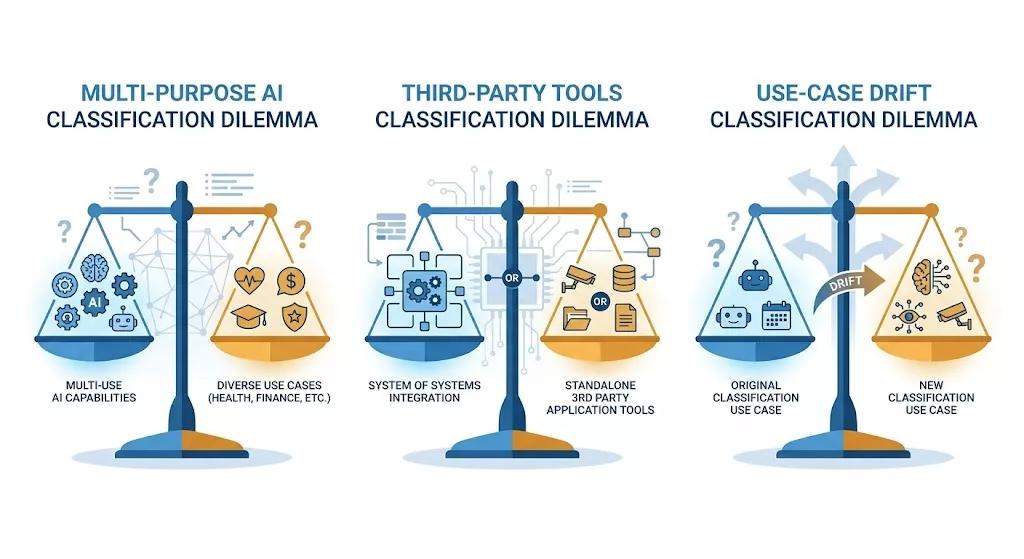

Even with a systematic decision framework, certain scenarios create genuine classification ambiguity. Here are the three most common borderline scenarios technical and compliance teams encounter — and how to navigate each one.

Multi-Purpose AI Systems

Many modern AI systems are genuinely multi-purpose. A large enterprise NLP model might simultaneously power customer service chatbots (limited-risk) and internal HR analysis tools (potentially high-risk). The classification question is: how do you classify the system as a whole?

The EU AI Act takes a function-level view. Therefore, a multi-purpose AI system must be classified separately for each distinct use case or deployment context. The highest-risk use case determines the most stringent obligations that apply. However, you only need to implement high-risk compliance obligations for the components or deployment contexts that actually qualify as high-risk — not for the entire system uniformly.

In practice, this means you need a clear technical architecture that separates high-risk functions from lower-risk functions — or accept that the entire unified system must meet the highest applicable tier’s requirements.
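The function-level view described above amounts to classifying each use case separately and then reading off two things: the strictest tier across the system, and the specific use cases that carry high-risk obligations. A minimal sketch, with hypothetical use-case names and a simplified three-tier ordering:

```python
from enum import IntEnum


class RiskTier(IntEnum):
    # Ordered so that a higher value means stricter obligations.
    MINIMAL = 1
    LIMITED = 2
    HIGH = 3


def strictest_tier(use_cases: dict[str, RiskTier]) -> RiskTier:
    """Tier the whole system must meet if its functions cannot be separated.

    Expects at least one use case; an empty mapping raises ValueError.
    """
    return max(use_cases.values())


def high_risk_use_cases(use_cases: dict[str, RiskTier]) -> list[str]:
    """Use cases that need high-risk controls when the architecture separates them."""
    return sorted(name for name, tier in use_cases.items() if tier == RiskTier.HIGH)


# One enterprise NLP model serving two deployments.
nlp_model_use_cases = {
    "customer_service_chatbot": RiskTier.LIMITED,
    "internal_hr_analysis": RiskTier.HIGH,
}

print(strictest_tier(nlp_model_use_cases).name)
print(high_risk_use_cases(nlp_model_use_cases))
```

If the architecture cleanly isolates the high-risk deployment, the second function tells you where to focus; if it does not, the first function tells you what the whole system has to meet.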

Third-Party AI Tools Your Team Deploys

As a deployer of third-party AI tools, you bear deployer obligations — including the obligation to verify that the tools you deploy are appropriately classified and compliant. You cannot simply rely on a vendor’s assurance that their tool is minimal-risk without checking the actual use case in your specific deployment context.

For example, suppose your company licenses a general-purpose AI writing assistant and uses it to generate performance review summaries that HR managers then use to make promotion decisions. The original tool provider classified it as minimal-risk for general productivity use. However, your specific deployment creates a high-risk use case under Annex III.4 (employment). Consequently, you as deployer bear responsibility for that classification and its compliance obligations.

Therefore, always evaluate third-party AI tools not just on their vendor’s classification, but on how you actually use them in your specific operational context. Then document that analysis as part of your classification record.
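One way to document that analysis is a small structured record that stores the vendor’s stated tier next to the tier you assign for your own deployment. The sketch below is only an assumption about how such a record might look: `DeploymentAssessment`, its field names, and the tool name are hypothetical, and none of them are prescribed by the Act.

```python
from dataclasses import dataclass

# Tiers from least to most strict. The ordering is used only for comparison.
TIER_ORDER = ["minimal", "limited", "high", "prohibited"]


@dataclass(frozen=True)
class DeploymentAssessment:
    """Hypothetical record comparing a vendor's tier with your deployment's tier."""
    tool: str
    vendor_tier: str
    deployment_purpose: str
    deployer_tier: str
    rationale: str

    def exceeds_vendor_tier(self) -> bool:
        """True when your use of the tool is stricter than the vendor's classification."""
        return TIER_ORDER.index(self.deployer_tier) > TIER_ORDER.index(self.vendor_tier)


# The writing-assistant example from the text, with an invented tool name.
assessment = DeploymentAssessment(
    tool="general-purpose writing assistant",
    vendor_tier="minimal",
    deployment_purpose="performance review summaries used in promotion decisions",
    deployer_tier="high",
    rationale="Output is an input to employment decisions (Annex III.4).",
)

if assessment.exceeds_vendor_tier():
    print(f"{assessment.tool}: deployment is {assessment.deployer_tier}-risk; "
          "vendor classification does not cover this use.")
```

A record like this makes the gap between the vendor’s classification and your actual use explicit, and it gives you a dated artifact to keep in your classification file.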

Use-Case Drift: When Classification Changes Over Time

AI systems evolve. A tool initially deployed for minimal-risk analytics may gradually become a primary input for high-stakes decisions — through feature additions, workflow integrations, or simply changing how teams rely on the output. This “use-case drift” can change a system’s classification without anyone formally deciding to reclassify it.

To address this risk, establish periodic classification reviews — at minimum annually, and triggered by any material change in how the system is used. Additionally, train your product and engineering teams to recognize when a system’s decision impact is increasing in ways that may trigger reclassification. Building classification review triggers into your product development lifecycle — alongside security reviews and privacy impact assessments — is the most effective structural solution.

Case Study: Use-Case Drift in Practice

A B2B SaaS Analytics Platform (Illustrative)

A workforce analytics SaaS company originally deployed its AI as a dashboard tool showing aggregate team productivity metrics. Initial classification: minimal-risk. In 2025, the company added a feature that generates individual employee “performance scores” visible to HR managers, which managers then use as primary input for performance review decisions.

This feature addition triggered high-risk classification under Annex III.4 — the AI now influences consequential employment decisions about individual employees. The company had not reclassified the system because no formal product decision had been made to “enter” the high-risk AI space. The feature simply evolved from aggregate analytics to individual scoring.

Outcome: The company conducted an emergency reclassification in Q1 2026 and began an accelerated compliance program. The lesson is that classification is a living determination, not a one-time event tied to initial product launch.

Frequently Asked Questions: EU AI Act Classification

These are the classification questions most frequently raised by technical teams, legal counsel, and compliance officers working through the EU AI Act.

How do I know if my AI system is high-risk under the EU AI Act?

Your AI system is high-risk if it meets two conditions simultaneously. First, it must operate within one of the eight Annex III sectors (or be embedded in an Annex I regulated product). Second, it must make or significantly influence consequential individual-level decisions — decisions that affect someone’s employment, access to services, educational opportunities, or fundamental rights.

Both conditions must apply. A healthcare analytics AI that provides aggregate population data without influencing individual patient decisions is likely not high-risk. By contrast, an AI at the same hospital that recommends individual treatment paths is almost certainly high-risk.
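The two-condition test can be expressed as a simple first-pass screen. The Python sketch below encodes only the two conditions as this guide describes them. `SystemProfile`, its field names, and `is_likely_high_risk` are assumptions made for illustration, and a positive or negative result is a prompt for proper analysis, not a legal determination.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SystemProfile:
    """Hypothetical answers to the two screening questions for one AI system."""
    name: str
    in_annex_iii_area: bool              # operates within an Annex III sector
    in_annex_i_product: bool             # embedded in an Annex I regulated product
    influences_individual_decisions: bool  # makes or significantly influences them


def is_likely_high_risk(profile: SystemProfile) -> bool:
    """Both conditions must hold: sector scope AND individual-level decision impact."""
    in_scope = profile.in_annex_iii_area or profile.in_annex_i_product
    return in_scope and profile.influences_individual_decisions


# The two hospital examples from the answer above.
population_analytics = SystemProfile(
    name="aggregate population analytics",
    in_annex_iii_area=True,
    in_annex_i_product=False,
    influences_individual_decisions=False,
)
treatment_recommender = SystemProfile(
    name="individual treatment recommender",
    in_annex_iii_area=True,
    in_annex_i_product=False,
    influences_individual_decisions=True,
)

for system in (population_analytics, treatment_recommender):
    print(system.name, "->", "likely high-risk" if is_likely_high_risk(system) else "likely not high-risk")
```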

What is the difference between high-risk and limited-risk AI under the EU AI Act?

The difference is substantial in both scope and cost. High-risk AI must satisfy seven distinct compliance requirements: risk management, data governance, technical documentation, record-keeping, transparency to deployers, human oversight, and accuracy, robustness, and cybersecurity. Meeting them requires significant engineering, legal, and governance investment, plus a conformity assessment before the system is placed on the EU market.

By contrast, limited-risk AI is subject only to transparency obligations, primarily disclosing to users that they are interacting with AI. The compliance effort is minimal compared to high-risk. Consequently, the classification distinction has major practical implications for your budget and timeline.

Does a chatbot qualify as high-risk AI under the EU AI Act?

Most general-purpose chatbots fall into the limited-risk tier. They must disclose their AI nature to users, but face no high-risk compliance obligations. However, function determines classification — not form. A chatbot that screens job candidates and ranks them for HR review is performing a high-risk function under Annex III.4, regardless of its conversational interface.

Therefore, always classify based on what the system does and what decisions it influences — not based on its technical format or user interface.

What happens if my AI system is misclassified?

Misclassifying a high-risk system as lower-risk exposes you to significant regulatory and commercial risk. On the regulatory side, you will have failed to meet the mandatory requirements for a high-risk system, which carries fines of up to €15 million or 3% of global annual turnover. On the commercial side, a market withdrawal order can stop EU revenue immediately.

Regulators also consider whether the misclassification was deliberate. Demonstrating good faith, however, requires documented evidence that you conducted a serious, systematic classification process. An undocumented classification decision offers no protection.

Is a recommendation algorithm high-risk under the EU AI Act?

Most recommendation algorithms are minimal-risk. Entertainment, e-commerce, and content discovery recommendations do not make or influence consequential individual-level decisions about people’s rights or access to services. Consequently, they face no mandatory compliance obligations.

However, there are exceptions. A recommendation algorithm that surfaces job opportunities or suggests credit products to individuals may sit closer to the boundary between limited-risk and high-risk, depending on how directly it influences individuals’ access to those services. The Decision Impact Test applies: is the AI influencing consequential access decisions for individuals?

Does the EU AI Act classification apply to AI used internally within a company?

Yes — internal AI tools are not exempt from EU AI Act classification. Specifically, AI used by businesses for internal professional purposes — including tools that only affect employees — falls within the Act’s scope. An internal performance management AI that influences promotion decisions is high-risk under Annex III.4, even though no external customers ever interact with it.

This is one of the most commonly misunderstood aspects of the Act. Internal HR AI, internal credit or budget allocation tools, and internal surveillance or monitoring systems all require classification analysis — not just customer-facing AI products.

After Classification: What to Do Next

If Your AI System Is Prohibited

Stop all deployment and development immediately. Do not attempt to restructure the system without qualified legal advice. Document the prohibited practice identified and the date of identification. Engage EU AI Act-specialized legal counsel before making any operational or product changes. The August 2026 deadline does not apply to prohibited AI — these systems were illegal as of February 2025.

If Your AI System Is High-Risk

First, record your classification decision formally using the documentation checklist above. Then, begin working through the seven compliance requirements. Specifically, the next step in your compliance journey is building your risk management system and starting Annex IV technical documentation.

For a complete guide to all seven requirements and a 90-day compliance action plan, read our EU AI Act Compliance Guide. Additionally, if your team needs guidance specifically on technical documentation requirements, see our cluster article on EU AI Act Documentation Requirements.

If Your AI System Is Limited-Risk

Implement the required transparency disclosures. Ensure your chatbots clearly identify themselves as AI. Label all synthetic AI-generated content. Inform individuals when emotion recognition systems assess them. Additionally, review your user interface and terms of service to ensure disclosures are prominent, clear, and delivered at the right moment in user interactions.

If Your AI System Is Minimal-Risk

No mandatory actions are required. However, consider whether voluntary best practice adoption — AI governance documentation, internal ethics review, and periodic classification re-evaluation — is appropriate for your risk profile and enterprise customers’ expectations. Furthermore, record your minimal-risk classification decision with supporting rationale, so you can demonstrate it was a deliberate, informed determination rather than an oversight.

💡 Classification Review Triggers — Set These Now

Your classification is not permanent. Set calendar reminders or product lifecycle triggers for classification review under these conditions:

- Any change to the system’s intended purpose or primary use case

- Deployment in a new country, sector, or user population

- A significant model update, retraining, or architecture change

- Integration with a new data source that changes decision inputs

- New guidance published by the European AI Office on relevant sectors

- Acquisition of a new AI tool or onboarding of a new AI vendor

- Annually, regardless of any specific change trigger
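The triggers above can be wired into a product lifecycle check. The following Python sketch is one possible shape for that check, under stated assumptions: the `Trigger` enum names, the `review_due` function, and the 365-day interval are illustrative choices for this example, not requirements taken from the Act.

```python
from datetime import date, timedelta
from enum import Enum, auto


class Trigger(Enum):
    """Event-based review triggers, mirroring the list above."""
    PURPOSE_CHANGE = auto()
    NEW_DEPLOYMENT_CONTEXT = auto()   # new country, sector, or user population
    MODEL_UPDATE = auto()             # significant update, retraining, or architecture change
    NEW_DATA_SOURCE = auto()
    NEW_OFFICIAL_GUIDANCE = auto()
    NEW_TOOL_OR_VENDOR = auto()


ANNUAL_INTERVAL = timedelta(days=365)  # assumed interval for the annual review


def review_due(last_review: date, today: date, events: set[Trigger]) -> bool:
    """A review is due if any trigger fired or a year has passed since the last one."""
    annual_due = today - last_review >= ANNUAL_INTERVAL
    return annual_due or bool(events)


# Illustrative dates only.
last = date(2025, 1, 10)
print(review_due(last, date(2025, 6, 1), set()))                   # no trigger, under a year
print(review_due(last, date(2025, 6, 1), {Trigger.MODEL_UPDATE}))  # event trigger fired
print(review_due(last, date(2026, 1, 10), set()))                  # annual review reached
```

A check like this could run as part of a release checklist, alongside security reviews and privacy impact assessments, so that a fired trigger blocks release until the classification record is updated.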

Classification is the foundation of your entire EU AI Act compliance strategy. Get it right, document it carefully, and revisit it regularly. Every compliance decision downstream — from resource allocation to technical architecture — flows from this starting point.

For the complete picture of what high-risk AI compliance requires in terms of timelines, penalties, and organizational readiness, return to our EU AI Act Compliance Guide.

Next in this cluster series: EU AI Act Documentation Requirements: What You Actually Need to Prepare — covering the complete Annex IV technical documentation requirements for high-risk AI systems.
